The Measurement Crisis

There Is a Number That Should Concern Every Leader

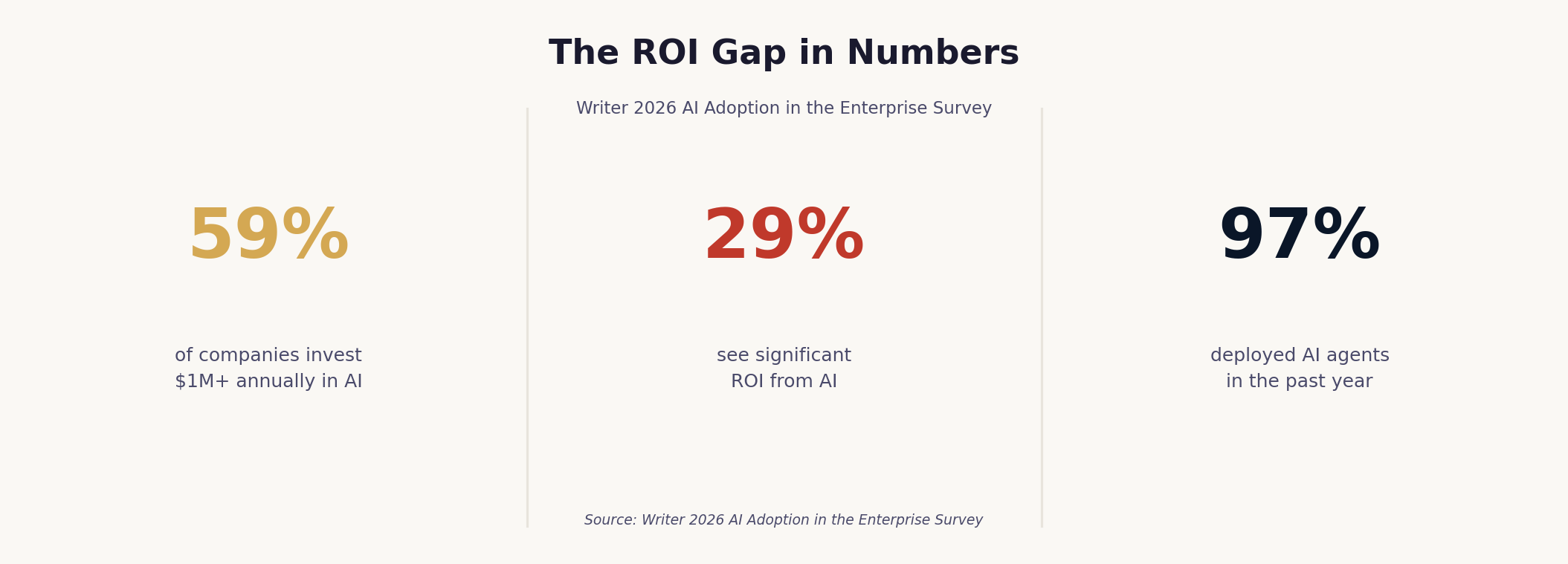

There is a number that should concern every leader who has invested in AI: 29%.

According to the 2026 AI Adoption in the Enterprise survey by Writer, 59% of companies are investing at least $1 million annually in AI technology. Ninety-seven percent of executives deployed AI agents in the past year. And yet only 29% are seeing significant returns from their investment. The same technology. Vastly different outcomes.

This is not a technology crisis. It is a measurement crisis.

When Microsoft's corporate vice president Alysa Taylor published a piece in TIME this week — on the same day the Stanford 2026 AI Index confirmed that enterprise AI adoption has reached near-universal levels — the diagnosis was clear: "AI isn't failing to deliver value. Organisations are struggling to see it because they're measuring it the wrong way."

The leaders pulling ahead are not those with better tools or bigger budgets. They are those who have fundamentally changed how they define, track, and communicate the value of AI. The rest are stuck in what the industry has started calling "pilot purgatory" — a state of perpetual experimentation that never quite becomes transformation.

"AI isn't failing to deliver value — organisations are struggling to see it because they're measuring it the wrong way." — Alysa Taylor, Microsoft, TIME, May 2026

The Productivity Trap

The Default Measurement Model Is Seductive — and Almost Entirely Wrong

The default measurement model for AI is seductive in its simplicity: count the hours saved, calculate the cost reduction, present the number to the CFO. It is familiar, it is auditable, and it is almost entirely wrong.

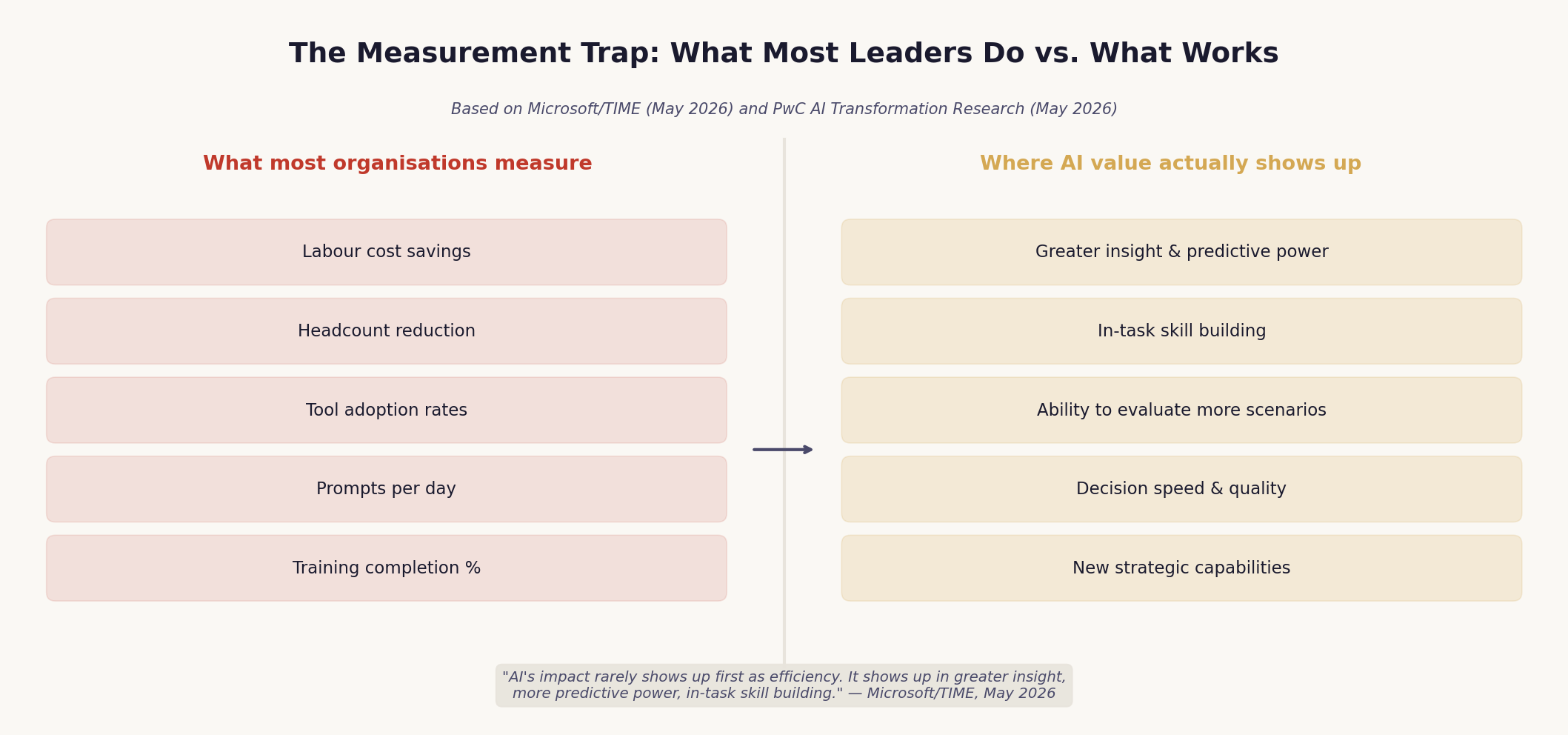

The problem is not that productivity metrics are irrelevant. It is that they capture only the first and most visible layer of AI's impact — and often not even that layer accurately. When organisations measure AI ROI through the lens of cost savings and headcount reduction, they are looking for value in the wrong place at the wrong time.

AI's impact, as Taylor writes, "rarely shows up first as efficiency. It shows up in greater insight, more predictive power, in-task skill building, and the ability to evaluate more scenarios before acting." These gains do not fit neatly into traditional metrics. They do not map cleanly into cost reduction. And because they are harder to count, they are systematically undercounted — which means the case for AI looks weaker than it actually is, initiatives stall, and organisations retreat to the safety of the pilot.

PwC's May 2026 research on AI transformation adds a structural dimension to this problem. Their analysis of high-performing AI organisations found that "AI value is uneven" — concentrated in specific domains and workflows rather than distributed evenly across the enterprise. Companies that pursue enterprise-wide AI mandates — deploying broadly in hopes of capturing value everywhere — consistently underperform companies that make concentrated bets in high-impact areas. The measurement problem compounds this: when you deploy broadly and measure shallowly, you see modest returns everywhere and transformative returns nowhere.

The Adoption Metric Illusion

Activity Metrics Tell You Something Is Happening. Not Whether It's Working.

When outcome measurement fails, organisations default to activity measurement. Adoption rates. Tool usage. Number of prompts per day. Percentage of employees who have completed AI training. These metrics are easy to collect, easy to present, and almost meaningless as indicators of business value.

The Writer 2026 survey captures the consequence of this substitution with striking clarity: 75% of executives admit their AI strategy is "more for show than actual guidance." This is not a confession of cynicism — it is a description of what happens when organisations measure activity instead of outcomes. The strategy becomes a signal to the board that AI is being taken seriously, rather than a genuine guide to where and how AI creates value.

The distinction matters enormously for leadership. Activity metrics tell you something is happening. They do not tell you whether it is working. A sales team that uses an AI tool every day but does not improve win rates has high adoption and zero ROI. A finance team that uses AI for one specific workflow — say, risk identification — and shifts from reactive audits to proactive risk management has low adoption and transformative ROI.
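The contrast between the two teams can be made concrete with a small sketch. The team names, field names, and every number below are hypothetical, chosen only to show that an adoption rate and an outcome change are computed from different inputs; none of them comes from the surveys cited in this piece.

```python
from dataclasses import dataclass


@dataclass
class TeamSnapshot:
    """Hypothetical snapshot for one team (illustrative values only)."""
    name: str
    employees: int
    weekly_ai_users: int    # activity: people who used the tool this week
    outcome_before: float   # business outcome before deployment
    outcome_after: float    # same outcome after deployment


def adoption_rate(team: TeamSnapshot) -> float:
    """Activity metric: share of the team using the tool."""
    return team.weekly_ai_users / team.employees


def outcome_change(team: TeamSnapshot) -> float:
    """Outcome metric: relative change in the result the team owns."""
    return (team.outcome_after - team.outcome_before) / team.outcome_before


# Made-up numbers mirroring the two teams described above:
# sales outcome = win rate; finance outcome = risks flagged before audit.
sales = TeamSnapshot("sales", 40, 38, outcome_before=0.25, outcome_after=0.25)
finance = TeamSnapshot("finance", 40, 6, outcome_before=20.0, outcome_after=30.0)

for team in (sales, finance):
    print(f"{team.name}: adoption {adoption_rate(team):.0%}, "
          f"outcome change {outcome_change(team):+.0%}")
```

In this illustration the sales team would top an adoption dashboard while its outcome is flat, and the finance team would sit at the bottom of the same dashboard while its outcome improves by half. A report that shows only the first column cannot tell the two apart.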

The BCG "Split Decisions" survey, published on 4 May 2026 and based on 625 global leaders, reveals the organisational consequence of this confusion. Sixty-one percent of CEOs say their boards are rushing AI transformation. More than half say AI hype is distorting boardroom judgment. CEOs estimate that 35% of their performance evaluation depends on achieving AI ROI, while boards put the figure at only 27%. That eight-point gap between perceived expectations and formal accountability is not just a communication problem. It is a measurement problem. When no one agrees on what success looks like, pressure accumulates without direction.

"61% of CEOs say their boards are rushing AI transformation. More than half say AI hype is distorting boardroom judgment." — BCG Split Decisions Survey, 625 global leaders, May 2026

What Good Measurement Looks Like

Define the Outcome First. Then Work Backward.

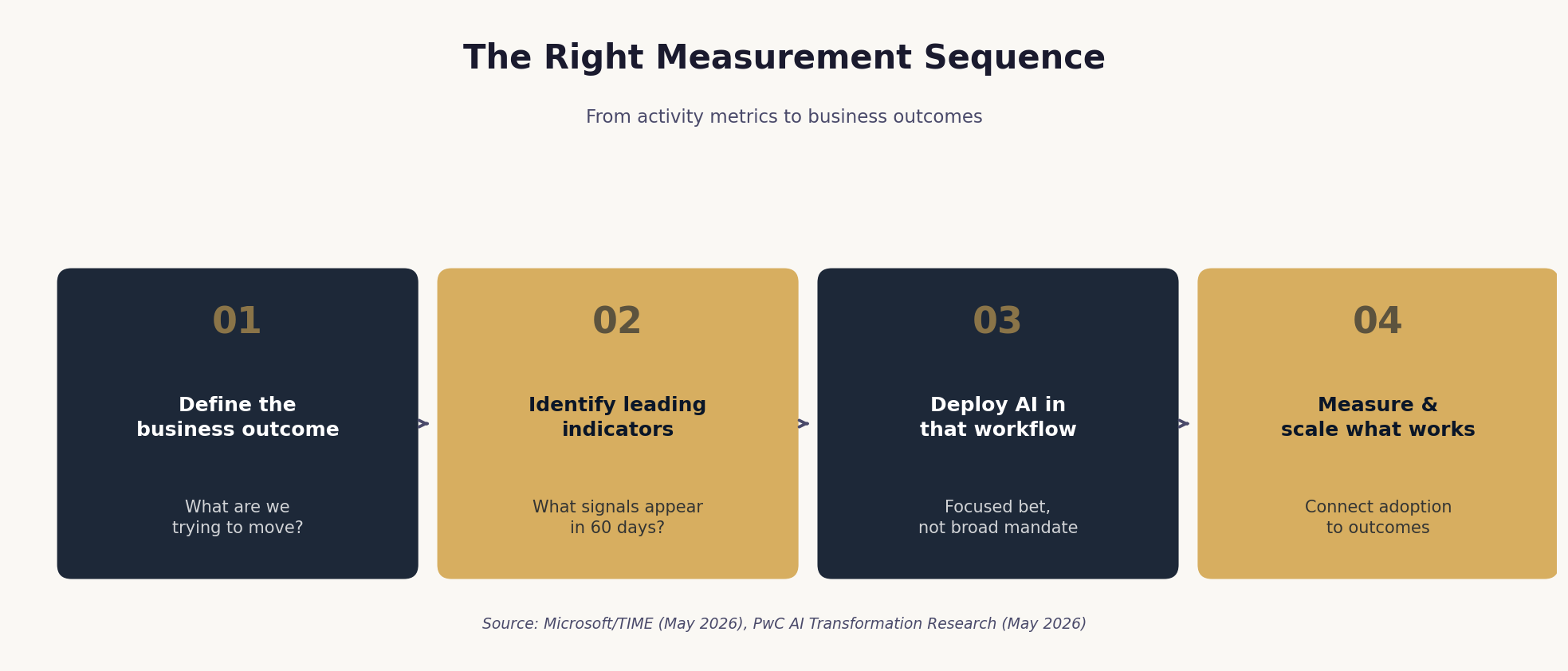

The organisations getting real ROI from AI share a common discipline: they define the outcome first, then work backward to where AI can make a meaningful difference.

This sounds obvious. It is not widely practised. In most organisations, the AI deployment decision precedes the outcome definition. A tool is selected, a pilot is launched, and the measurement framework is built afterward — often to justify a decision already made rather than to guide one being made. The result is what Taylor calls "pockets of activity but little business impact."

The right sequence is the reverse. Start with the business outcome that matters most — increasing revenue per salesperson, improving customer retention, accelerating product development, reducing regulatory risk. Then ask: where in the workflow that drives this outcome can AI make a meaningful difference? Then deploy there, and measure against that outcome from day one.

This approach has three practical implications for leaders. First, it requires distinguishing between leading indicators and lagging indicators. Revenue growth and margin improvement are lagging indicators — they take time to appear, and waiting for them to validate an AI investment is a recipe for abandoning initiatives too early. The leading indicators — time spent with customers, pipeline quality, decision speed, error rates — appear much sooner and, if chosen well, predict the lagging outcomes.

Second, it requires making the signals that matter visible and legitimate. In knowledge work, far less is visible than in manufacturing. Tool usage can be tracked; how decisions improve is much harder to see. The people closest to the work often see the impact first — in speed, quality, and customer engagement — but those observations are dismissed because they do not fit traditional reporting models. Leaders who create channels for these signals, and treat them as legitimate evidence of value, build the feedback loops that allow AI initiatives to scale.

Third, it requires resisting the pressure to show ROI primarily through labour cost savings. This is the most politically difficult implication, because the pressure is real and comes from boards and CFOs who are comfortable with that model. But as PwC's research shows, the organisations that focus on cost reduction as the primary AI metric consistently underperform those that focus on capability addition — the ability to do things that were previously impossible, not just to do existing things more cheaply.

The Super-User Signal

The Metric That Actually Predicts Transformation

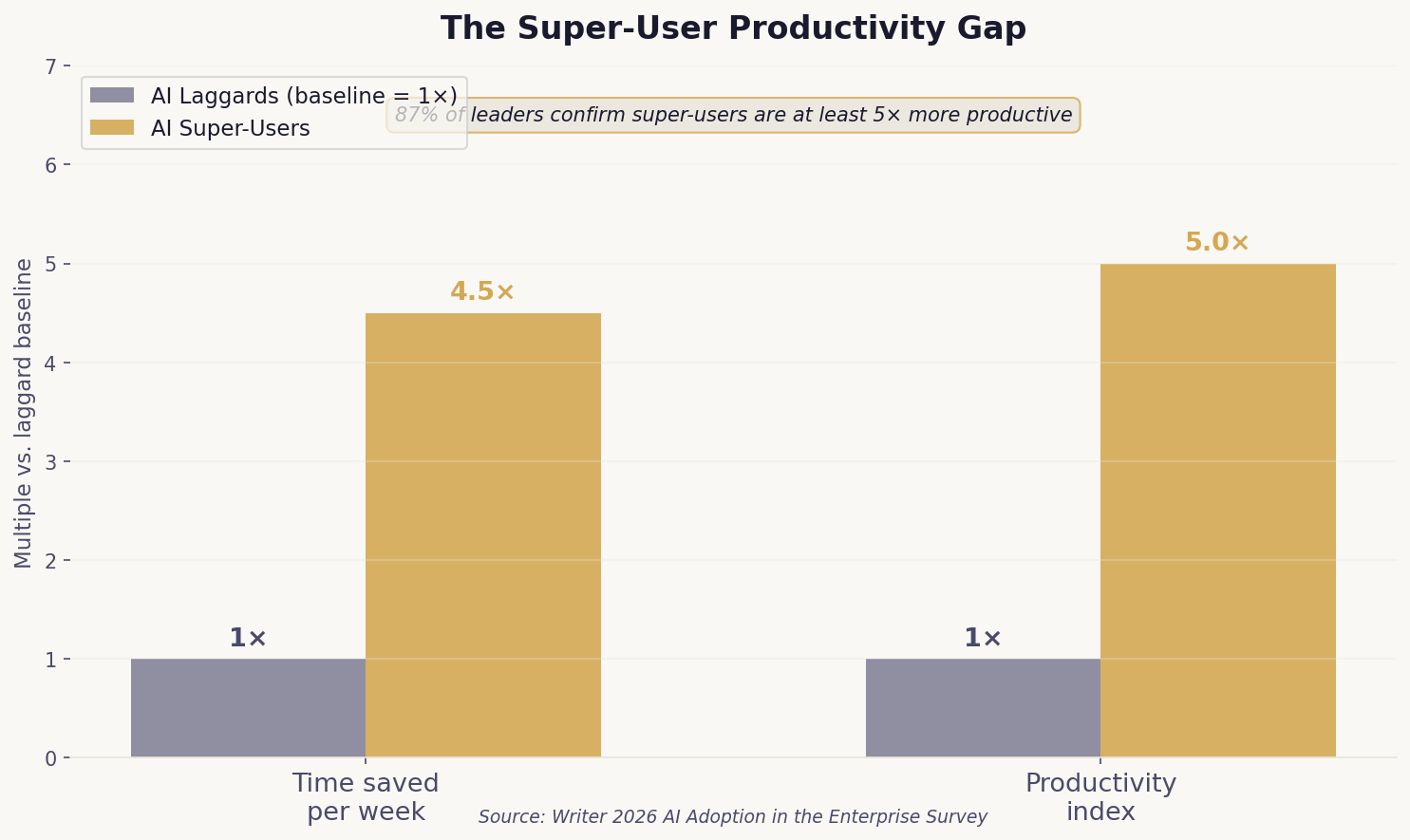

There is one metric from the Writer 2026 survey that deserves particular attention from leaders: the productivity gap between AI super-users and AI laggards.

Super-users — employees who are deeply comfortable with AI and use it across complex tasks — save nearly 4.5 times as much time per week as laggards. Eighty-seven percent of leaders confirm that their company's AI super-users are at least 5 times more productive than those who rarely use AI beyond basic prompts.

This gap is not primarily a training problem. It is an architecture problem. The organisations generating super-users are not those with the best AI tools — they are those that have redesigned workflows around AI capabilities, created communities of practice where advanced usage spreads, and measured and rewarded the behaviours that drive deep adoption rather than surface-level compliance.

The implication for measurement is direct: if you are measuring average adoption across the organisation, you are measuring the wrong thing. The signal that predicts transformation is not average usage — it is the depth of usage among your highest-performing cohort, and the rate at which that depth is spreading. An organisation where 10% of employees are genuine super-users and that percentage is growing is on a transformation trajectory. An organisation where 80% of employees use AI occasionally for basic tasks is not.
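One way to track this signal is sketched below. It is a minimal illustration, not a method taken from the Writer survey: the definition of a super-user (a weekly count of complex AI-assisted tasks at or above a threshold), the threshold itself, and the sample data are all assumptions made for the example.

```python
from typing import Sequence


def super_user_share(weekly_complex_tasks: Sequence[int], threshold: int) -> float:
    """Fraction of employees at or above the assumed super-user threshold."""
    if not weekly_complex_tasks:
        return 0.0
    deep_users = sum(1 for n in weekly_complex_tasks if n >= threshold)
    return deep_users / len(weekly_complex_tasks)


def share_growth(previous: float, current: float) -> float:
    """Change in super-user share between two periods, in percentage points."""
    return (current - previous) * 100


# Hypothetical data: complex AI-assisted tasks per employee per week.
last_quarter = [0, 1, 0, 2, 12, 0, 1, 3, 0, 1]
this_quarter = [1, 1, 0, 3, 14, 0, 11, 4, 0, 2]

THRESHOLD = 10  # assumed cut-off; each organisation would set its own

prev = super_user_share(last_quarter, THRESHOLD)
curr = super_user_share(this_quarter, THRESHOLD)
print(f"super-user share: {prev:.0%} -> {curr:.0%} "
      f"({share_growth(prev, curr):+.0f} pts)")
```

The design choice worth noting is that the sketch reports two numbers, the share and its change, rather than an average across all employees. An average would barely move in this sample even though the deep-usage cohort has doubled.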

The Measurement Mandate

The Metric Trap Is Not Inevitable

The measurement crisis in AI is ultimately a leadership crisis. Not because leaders are failing to pay attention — they are paying enormous attention — but because the frameworks they are using to evaluate AI were designed for a different kind of investment.

Traditional ROI frameworks assume that value is created by substituting technology for labour, that the benefits are visible quickly, and that they can be captured in financial metrics. AI does not work this way. Its most significant value is created by augmenting human judgment, by enabling decisions that were previously impossible, and by building organisational capabilities that compound over time. These benefits are real, but they are invisible to traditional measurement frameworks.

The leaders who will navigate this transition successfully are those who are willing to do the harder work: defining success in terms of business outcomes rather than technology adoption, building measurement systems that capture leading indicators alongside lagging ones, and making the case to their boards and CFOs for a more sophisticated understanding of what AI value looks like.

According to the BCG survey, 80% of both CEOs and board members agree that prospective board members should be required to demonstrate a measurable understanding of how AI can reshape their industry. That is a remarkable level of consensus. The question is whether that consensus translates into the kind of AI literacy that changes how value is defined and measured at the top of organisations, or whether it remains, like so many AI strategies, more for show than for guidance.

The metric trap is not inevitable. But escaping it requires a deliberate choice: to measure what matters, not what is easy to count.

"The advantage won't come from adoption alone — but from how well organisations connect that adoption to real business impact, and how quickly they learn and scale what works." — Alysa Taylor, Microsoft

THREE QUESTIONS FOR YOUR NEXT LEADERSHIP MEETING

1. What is the one business outcome you most want AI to move in the next 12 months? Have you defined the leading indicators that will tell you, in 60 days, whether you are on track?
2. Are you measuring AI adoption (activity) or AI impact (outcomes)? What would you need to change to shift from one to the other?
3. Who are your AI super-users? What are they doing differently, and how fast is that spreading to the rest of the organisation?

| Finding | Source | Date |
|---|---|---|
| 59% of companies invest $1M+ annually in AI | Writer 2026 AI Adoption Survey | April 2026 |
| Only 29% see significant ROI | Writer 2026 AI Adoption Survey | April 2026 |
| 97% of executives deployed AI agents in past year | Writer 2026 AI Adoption Survey | April 2026 |
| 75% of executives say AI strategy is 'more for show' | Writer 2026 AI Adoption Survey | April 2026 |
| AI super-users save 4.5× more time per week | Writer 2026 AI Adoption Survey | April 2026 |
| 87% of leaders say super-users are 5× more productive | Writer 2026 AI Adoption Survey | April 2026 |
| 61% of CEOs say boards are rushing AI transformation | BCG Split Decisions Survey (n=625) | 4 May 2026 |
| CEOs: 35% of their evaluation depends on AI ROI | BCG Split Decisions Survey | 4 May 2026 |
| AI value is uneven; concentrated bets outperform | PwC AI Transformation Research | May 2026 |